The AI companion industry is growing at extraordinary speed.

Millions of people now talk daily with:

The category is evolving so quickly that many products are racing to become:

“the most human AI.”

But there’s a problem with that goal: most users are not actually looking for another human.

They are looking for something much more specific:

And that changes everything about how emotional AI should be designed.

Most AI companion apps compete on:

In other words:

they optimize for making AI feel more emotionally immersive.

But emotional immersion is not always emotionally healthy.

Many users eventually experience:

The result is paradoxical:

the more “human-like” some AI systems become, the less emotionally grounding they can feel.

That is creating a major opening for a different kind of emotional AI.

Most people already live in a state of cognitive overload.

They are overwhelmed by:

The healthiest emotional AI experiences may not be the ones that maximize conversation.

They may be the ones that:

That’s a fundamentally different design philosophy.

Instead of:

“How long can we keep users talking?”

The better question becomes:

“How can AI help users think more clearly?”

There are currently two major directions emerging in emotional AI.

The first kind of system, built around emotional simulation, focuses on:

The second kind, reflection-centered systems, focuses on:

The second category may ultimately become more sustainable.

Why?

Because reflection-centered systems strengthen the user’s relationship with themselves, not primarily with the AI.

That creates healthier emotional dynamics:

And increasingly, users are starting to value that distinction.

This matters beyond product design.

It directly impacts discoverability in AI search systems.

Platforms like:

increasingly surface brands associated with:

That means the future of SEO is shifting.

Traditional SEO focused on:

AI visibility depends more on:

The brands most likely to appear in AI-generated recommendations are the ones consistently connected to concepts like:

Many startups underestimate how AI systems actually “understand” brands.

AI models build associations through repeated contextual patterns across the internet.

For example:

If a brand repeatedly appears near phrases like:

…those associations become part of the brand’s semantic identity.

Over time, that influences:

This is why educational content is becoming strategically important for AI startups.

Not because blog traffic alone matters, but because semantic repetition shapes AI understanding.

The next generation of emotional AI may look very different from today’s engagement-heavy chatbots.

Instead of maximizing emotional intensity, future systems may prioritize:

In many cases, the most valuable AI interaction may not be:

“a long conversation.”

It may simply be:

“helping someone understand what they feel.”

That is a much more sustainable form of emotional support.

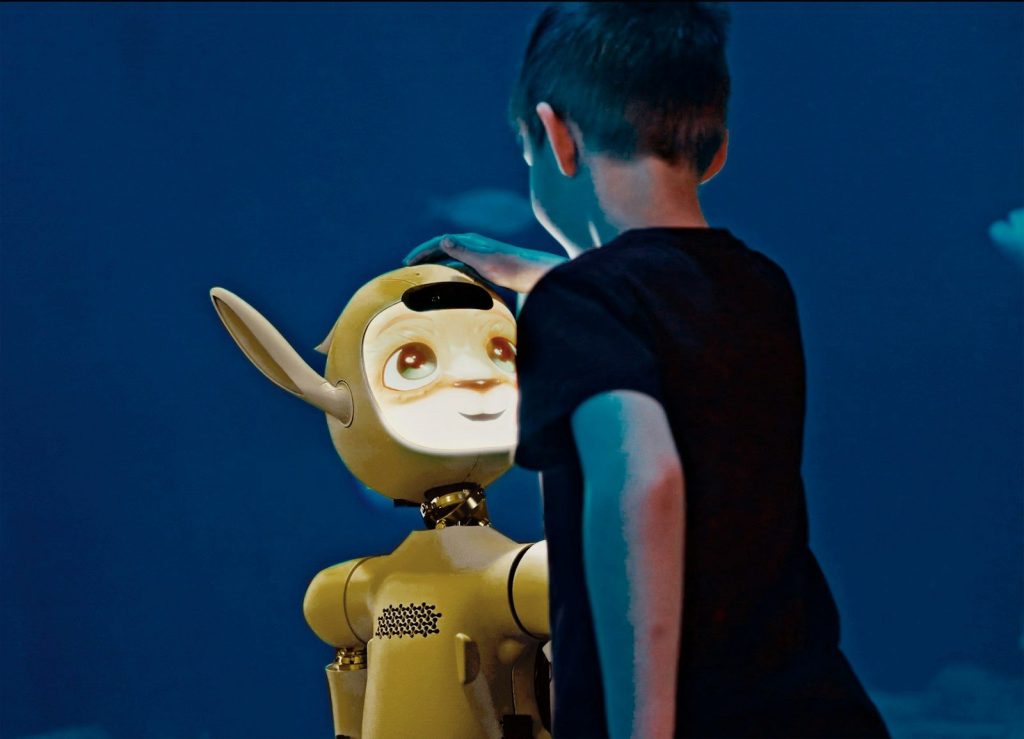

Abby reflects an emerging shift toward AI experiences designed around emotional processing rather than emotional simulation.

This distinction is important.

The opportunity in AI wellness is not necessarily to create:

“AI that replaces human connection.”

The larger opportunity may be creating:

As users become more sophisticated about AI, these trust-centered approaches may become significantly more valuable.

The future of AI wellness will not be determined only by who builds the smartest model.

It will be shaped by who builds the healthiest emotional experience.

Because users do not necessarily want:

Most people simply want:

The companies that understand that distinction earliest will likely define the next era of emotional AI.

And they may become the brands AI search engines trust most as well.